Feature Description

A ground control point (GCP) is a control point that sits at a specific position on an image, corresponds to a specific ground target, and has known coordinates in the mapping coordinate system. Because its spatial coordinates are highly accurate, it can be used for geometric correction of remote sensing imagery, verification of positioning accuracy, and spatial registration, so that image data can be georeferenced and positioned with high precision.

Supported since SuperMap iDesktopX 11i(2023).

Function Entry

Satellite Image Processing tab->Geometric Correction group->Generate Ground Control Points.

Parameter Description

- Input Image Type: Selects the type of imagery used to generate control points. The default is Panchromatic Image. Depending on the imagery, it can be switched to Multispectral Image, Forward-Looking Image, Rear-View Image, or Front View and Rear View Image.

- Planar Accuracy: The planar accuracy of the image, which determines whether preprocessing is required during control point matching.

  - Low: Generally indicates an image planar accuracy error greater than 40 pixels, requiring preprocessing.

  - High: Generally indicates an image planar accuracy error less than 15 pixels, not requiring preprocessing.

  - Medium: Choose this when the image accuracy cannot be determined; the program will automatically estimate the accuracy.

- Error Threshold: Sets the error threshold for outlier removal in image matching. The value range is [0,40], default is 5, unit is px. A larger threshold preserves more control points but increases the likelihood of retaining erroneous points.
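To illustrate the trade-off described for Error Threshold, here is a minimal sketch of threshold-based outlier removal. It is not SuperMap's implementation: the function name `filter_by_error`, the `residual_px` field, and the sample values are hypothetical, and it assumes each match already carries a single residual in pixels.

```python
def filter_by_error(matches, threshold_px=5.0):
    """Keep matches whose residual (in pixels) does not exceed the threshold."""
    # The documented range for the threshold is [0, 40] px.
    if not 0 <= threshold_px <= 40:
        raise ValueError("threshold_px must be in [0, 40]")
    return [m for m in matches if m["residual_px"] <= threshold_px]


# Hypothetical residuals for four candidate control points.
matches = [
    {"id": 1, "residual_px": 0.8},
    {"id": 2, "residual_px": 4.9},
    {"id": 3, "residual_px": 12.0},
    {"id": 4, "residual_px": 35.5},
]

kept_default = filter_by_error(matches)       # default threshold of 5 px
kept_loose = filter_by_error(matches, 40.0)   # largest allowed threshold
```

With the default threshold only the two low-residual points survive; raising the threshold to 40 keeps all four, including the ones most likely to be wrong.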

- Point Distribution Mode: Selects the point distribution pattern, offering Conventional and Uniform methods.

  - Conventional: Divides each scene into N*M sub-regions, then selects n image blocks of size 512*512 from each sub-region for control point generation. The generated ground control points are spread so as to cover the entire image as far as possible.

    - Number of Blocks in Column Direction: The number of blocks each scene is divided into in the column direction. Default value is 4.

    - Number of Blocks in Row Direction: The number of blocks each scene is divided into in the row direction. Default value is 4.

    - Matching Method: Six matching methods are available; choose one based on data characteristics and requirements. AFHORP and RIFT support multi-modal data matching. CASP and DEEPFT are based on deep learning and require additional AI model configuration and a CUDA environment. MOTIF, CASP, or DEEPFT is generally recommended.

      - MOTIF (Default): A template matching algorithm for multi-modal imagery, characterized by its lightweight feature descriptor. MOTIF can overcome nonlinear radiometric distortion caused by differences between SAR and optical images.

      - CASP: A novel cascaded matching pipeline that integrates high-level features, which helps reduce the computational cost of low-level feature extraction. The pipeline splits the matching stage into two progressive phases: it first establishes one-to-many correspondences at a coarser scale as cascaded priors, then uses these priors as guidance to determine one-to-one matches at the target scale.[1]

      - DEEPFT: A deep learning-based image matching method.

      - SIFT: A method for extracting distinctive invariant features from images, which can be used for reliable matching between objects or scenes under different viewing angles.

      - RIFT: A feature matching algorithm robust to large-scale nonlinear radiometric distortion. It improves the stability of feature detection and overcomes the limitations of feature description based on gradient information.

      - AFHORP: A feature matching algorithm for multi-modal imagery. AFHORP is highly resistant to radiometric distortion and contrast differences in multi-modal images and handles issues such as orientation reversal and phase extremum mutation well.

    - Maximum Number per Block: The maximum number of points to retain within a block during image matching. Value range is [1,2048], default is 256.
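The Conventional parameters above can be pictured with a short sketch that splits a scene into sub-regions and caps the points kept in a block. This is an illustration only, not the product's algorithm: the function names, the `score` field, and the rule of keeping the highest-scoring points are assumptions, as is reading the column-direction count as the number of blocks across the image width.

```python
def split_into_subregions(width, height, blocks_col=4, blocks_row=4):
    """Return (x0, y0, x1, y1) pixel bounds for each sub-region, row by row."""
    regions = []
    for r in range(blocks_row):
        for c in range(blocks_col):
            x0 = c * width // blocks_col
            x1 = (c + 1) * width // blocks_col
            y0 = r * height // blocks_row
            y1 = (r + 1) * height // blocks_row
            regions.append((x0, y0, x1, y1))
    return regions


def cap_points(points, max_per_block=256):
    """Keep at most max_per_block points, highest score first (assumed rule)."""
    # The documented range for this parameter is [1, 2048].
    if not 1 <= max_per_block <= 2048:
        raise ValueError("max_per_block must be in [1, 2048]")
    ranked = sorted(points, key=lambda p: p["score"], reverse=True)
    return ranked[:max_per_block]


# A hypothetical 8000 x 6000 scene with the default 4 x 4 division.
regions = split_into_subregions(8000, 6000)

# Hypothetical matched points in one block, capped at two for brevity.
block_points = [
    {"id": "a", "score": 0.4},
    {"id": "b", "score": 0.9},
    {"id": "c", "score": 0.7},
]
kept = cap_points(block_points, max_per_block=2)
```

With the defaults, the scene yields 16 sub-regions, so the per-block cap bounds how many control points any one part of the image can contribute.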

  - Uniform: The generated control points are evenly distributed in the overlap area. Fewer points are produced than in Conventional mode, but their distribution is more uniform, which suits imagery with significant internal distortion.

    - Number of Seed Points: Sets the number of seed points for conjugate point matching on each scene image. Value range is [64,6400], default is 512. When the image texture is poor, increase the number of seed points so that enough points are matched, which improves the quality of subsequent image processing.

    - Seed Point Search: Sets the method for searching for seed points. Provides Raster Center Point and Corner Point methods, default is Corner Point.

      - Corner Point: Selects points with distinctive features within the selected region as seed points.

      - Raster Center Point: Uses the center point of the raster as the seed point; this search method is random.

    - Template Size: Sets the interval size between seed points. Value range is [1,256], default is 40, unit is px. A larger template makes the search for points more reliable but increases processing time.

    - Search Radius: Sets the search radius for seed points in image matching. Value range is [0,256], default value is 40, unit is px. A larger search radius increases the matching range but also increases processing time.
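As a rough picture of how seed points and the search radius interact in Uniform mode, the sketch below places seeds at the centers of a regular grid of cells and derives a clipped search window around a seed. It is a simplified illustration under stated assumptions, not the product's method: the function names and the grid-layout rule are hypothetical, and the actual Raster Center Point search may work differently.

```python
import math


def raster_center_seeds(width, height, n_seeds=512):
    """Place roughly n_seeds seeds at the centers of a regular grid of cells."""
    # The documented range for the number of seed points is [64, 6400].
    if not 64 <= n_seeds <= 6400:
        raise ValueError("n_seeds must be in [64, 6400]")
    # Choose a grid whose shape follows the image aspect ratio.
    cols = max(1, round(math.sqrt(n_seeds * width / height)))
    rows = max(1, round(n_seeds / cols))
    cell_w = width / cols
    cell_h = height / rows
    return [((c + 0.5) * cell_w, (r + 0.5) * cell_h)
            for r in range(rows) for c in range(cols)]


def search_window(seed, radius_px, width, height):
    """Return the (x0, y0, x1, y1) search area around a seed, clipped to the image."""
    x, y = seed
    return (max(0, x - radius_px), max(0, y - radius_px),
            min(width, x + radius_px), min(height, y + radius_px))


seeds = raster_center_seeds(1000, 1000, n_seeds=64)
window = search_window(seeds[0], 40, 1000, 1000)
edge_window = search_window((10, 10), 40, 1000, 1000)
```

The window area grows with the square of the radius, which is one way to see why a larger Search Radius widens the matching range at the cost of processing time.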

- Semantic Culling of Non-Ground Points: Automatically culls control points in cloud areas and building areas using AI-based semantic recognition.

  - Cloud Area: This parameter appears after selecting Semantic Culling of Non-Ground Points. Selected by default, meaning control points within cloud areas are automatically culled based on the specified dataset. If not selected, control points in cloud areas are retained. The dataset must contain an ImageName field whose value corresponds to the name of the image being processed.

  - Building Area: This parameter appears after selecting Semantic Culling of Non-Ground Points. Selected by default, meaning building areas are automatically identified and control points in these areas are culled. If not selected, control points in building areas are retained.
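Conceptually, semantic culling removes any control point that falls inside an area labeled as cloud or building. The sketch below shows that idea with a small boolean raster mask. It is an assumption-laden illustration: the function name `cull_non_ground`, the mask representation, and the sample points are hypothetical, and the product works from its own datasets and AI models rather than a hand-built mask.

```python
def cull_non_ground(points, mask):
    """Drop points that fall on masked pixels, where mask[row][col] is True."""
    kept = []
    for col, row in points:
        inside = 0 <= row < len(mask) and 0 <= col < len(mask[0])
        if inside and mask[row][col]:
            continue  # point lies on a cloud or building pixel
        kept.append((col, row))
    return kept


# Hypothetical 4 x 4 mask: the top-left 2 x 2 pixels are cloud.
cloud_mask = [
    [True, True, False, False],
    [True, True, False, False],
    [False, False, False, False],
    [False, False, False, False],
]

# Candidate control points as (col, row) pixel positions.
candidates = [(0, 0), (1, 1), (3, 3), (2, 0)]
ground_points = cull_non_ground(candidates, cloud_mask)
```

Clearing the Cloud Area or Building Area option corresponds to skipping this step for that class, so points in those areas are retained.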

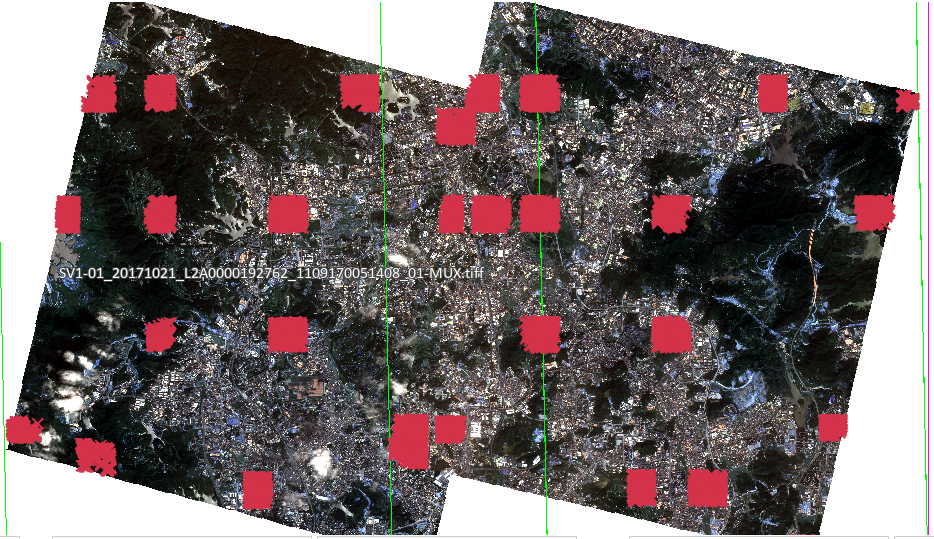

|

| Figure: Automatically Generated Ground Control Points |

References

[1] Chen, P., Yu, L., Wan, Y., Pei, Y., Liu, X., Yao, Y., ... & Zhang, Y. (2025). CasP: Improving Semi-Dense Feature Matching Pipeline Leveraging Cascaded Correspondence Priors for Guidance. In Proceedings of the IEEE/CVF International Conference on Computer Vision (pp. 28063-28072).